We bring you concise, up-to-the-minute coverage of the founders, funding rounds, and technologies shaping tomorrow. Expect clear explainers, deal roundups, and stories that cut through the noise, so you can spot the next big move in tech, fast.

Examining how robots are moving from demonstrations to daily use.

CES 2026 did not frame robotics as a distant future or a technological spectacle. Instead, it highlighted machines designed for the slow, practical work of fitting into human systems. Across the show floor, robots were no longer performing for attention but being shaped by real-world constraints—space, safety, fatigue and repetition.

They appeared in factories, homes, emergency settings and industrial sites, each responding to a specific kind of human limitation. Together, these four robots reveal how robotics is being redefined: not as a replacement for people, but as infrastructure that quietly takes on work humans are least meant to carry alone.

Hyundai Motor unveiled its electric humanoid robot, Atlas, during a media day on January 5, 2026, at the Mandalay Bay Convention Center in Las Vegas as part of CES 2026. Developed with Boston Dynamics, Hyundai’s U.S.-based robotics subsidiary, Atlas was presented in two forms: a research prototype and a commercial model designed for real factory environments.

Shown under the theme “AI Robotics, Beyond the Lab to Life: Partnering Human Progress,” Atlas is designed to work alongside humans rather than replace them. The premise is straightforward—robots take on physically demanding and repetitive tasks such as sorting and assembly, while people focus on work requiring judgment, creativity and decision-making.

Built for industrial use, the commercial version of Atlas is designed to adapt quickly, with Hyundai stating it can learn new tasks within a day. Its adult-sized humanoid form features 56 degrees of freedom, enabling flexible, human-like movement. Tactile sensors in its hands and a 360-degree vision system support spatial awareness and precise operation.

Atlas is also engineered for demanding conditions. It can lift up to 50 kilograms, operate in temperatures ranging from –20°C to 40°C and is waterproof, making it suitable for challenging factory settings.

Looking ahead, Hyundai expects Atlas to begin with parts sorting and sequencing by 2028, move into assembly by 2030 and later take on precision tasks that require sustained physical effort and focus.

Widemount’s Smart Firefighting Robot is designed to operate in environments that are difficult and dangerous for humans to enter. Developed by Widemount Dynamics, a spinout from the Hong Kong Polytechnic University, the robot is built to support emergency teams during fires, particularly in enclosed and smoke-filled spaces.

The robot can move through buildings and industrial facilities even when visibility is near zero. Rather than relying on cameras or GPS, it uses radar-based mapping to understand its surroundings and determine a safe path forward. This allows it to continue operating when smoke, heat or debris would normally restrict access.
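Widemount has not published how its planner works. As a minimal sketch, assume the radar scan has already been turned into a 2D occupancy grid, with 1 for blocked cells and 0 for free ones. A breadth-first search can then find the shortest route that avoids every blocked cell. The grid, the function name `plan_path` and the 4-connected movement model are illustrative assumptions, not the robot's actual software.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]


def plan_path(grid: List[List[int]], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Breadth-first search over a hypothetical occupancy grid.

    grid[r][c] == 1 marks a cell the map reports as blocked (wall, debris);
    0 marks free space. Returns the shortest 4-connected path from start to
    goal, or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    came_from: Dict[Cell, Optional[Cell]] = {start: None}  # doubles as the visited set
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if current == goal:
            # Walk back through the recorded parents to rebuild the route.
            path: List[Cell] = []
            node: Optional[Cell] = current
            while node is not None:
                path.append(node)
                node = came_from[node]
            return path[::-1]
        r, c = current
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            nxt = (nr, nc)
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0 and nxt not in came_from:
                came_from[nxt] = current
                queue.append(nxt)
    return None


# A small map with a wall the robot must route around.
corridor = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
    [0, 1, 1, 1],
]
print(plan_path(corridor, (0, 0), (2, 0)))
```

Breadth-first search is the simplest choice when every free cell costs the same to cross. A real firefighting robot would more likely weight cells by heat or sensor uncertainty and use a cost-aware planner such as A*.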

As it approaches a fire, the robot analyses the burning object. Its onboard AI helps identify the material involved and selects an appropriate extinguishing method. Sensors simultaneously assess flame intensity and send real-time updates to command centres, giving responders clearer situational awareness.

When actively fighting a fire, the robot can aim directly at the source and deploy extinguishing agents autonomously. The system continuously adjusts its actions based on incoming sensor data, reducing the need for constant human intervention during high-risk situations.

At CES 2026, LG Electronics offered a glimpse into how household work could gradually shift from people to machines. The company introduced LG CLOiD, an AI-powered home robot designed to manage everyday chores by working directly with connected appliances within LG’s ThinQ ecosystem.

Designed for indoor living spaces, CLOiD features a compact upper body with two articulated arms, a head unit and a wheeled base that enables steady movement across floors. Its torso can tilt to adjust height, allowing it to reach items placed low or on kitchen counters. The arms and hands are built for careful handling, enabling the robot to grip common household objects rather than heavy tools. The head also functions as a mobile control unit, housing cameras, sensors, a display and voice interaction capabilities for communication and monitoring.

In practice, CLOiD acts as a task coordinator. It can retrieve items from appliances, operate ovens and washing machines and manage laundry cycles from start to finish, including folding and stacking clothes. By connecting multiple devices through the ThinQ system, the robot turns separate appliances into a single, coordinated workflow.

These capabilities are supported by LG’s Physical AI system. CLOiD uses vision to recognise objects and interpret its surroundings, language processing to understand instructions and action control to execute tasks step by step. Together, these systems allow the robot to follow routines, respond to user input and adjust task execution over time.

Doosan Robotics introduced Scan & Go at CES 2026, an AI-driven robotic system designed to automate large-scale surface repair and inspection. The solution targets environments with complex, irregular surfaces that are difficult to pre-program, such as aircraft structures, wind turbine blades and large industrial installations.

Scan & Go operates by scanning surfaces on site and building an understanding of their shape in real time. Instead of relying on detailed digital models or manual coding, the system plans its movements based on live data. This enables it to adapt to variations in size, curvature and surface condition without extensive setup.

The underlying technology combines 3D sensing with AI-based motion planning. The system interprets surface data, generates tool paths and refines its actions as work progresses. In practical terms, this reduces manual intervention while maintaining consistency across large work areas.
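The source does not describe Doosan's path-planning algorithm. The sketch below only illustrates the general idea of planning from live scan data rather than a digital model. Given a height map sampled on a regular grid, it produces a serpentine (back-and-forth) tool path that tracks the measured surface at a fixed standoff. The function name, grid layout and standoff parameter are hypothetical.

```python
from typing import List, Tuple

Point3D = Tuple[float, float, float]


def serpentine_tool_path(heights: List[List[float]], spacing: float,
                         standoff: float) -> List[Point3D]:
    """Turn a scanned height map into a back-and-forth tool path.

    heights[row][col] is the measured surface height at a grid sample,
    spacing is the distance between samples, and standoff is how far above
    the measured surface the tool should travel.
    """
    path: List[Point3D] = []
    for row_index, row in enumerate(heights):
        cols = list(range(len(row)))
        if row_index % 2 == 1:
            cols.reverse()  # alternate direction to avoid long empty return moves
        for col_index in cols:
            x = col_index * spacing
            y = row_index * spacing
            z = row[col_index] + standoff  # follow the scanned surface, not a nominal model
            path.append((x, y, z))
    return path


# Two rows of a hypothetical scan of a gently curved panel (units: metres).
scan = [
    [0.00, 0.05, 0.10],
    [0.10, 0.05, 0.00],
]
for point in serpentine_tool_path(scan, spacing=0.1, standoff=0.02):
    print(point)
```

Because every z value comes from the scan itself, the same function handles a blade, a fuselage panel or a tank wall without a pre-built model.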

By handling surface preparation and inspection tasks that are time-consuming and physically demanding, Scan & Go is positioned as a support tool for industrial teams operating at scale.

Taken together, these robots signal a clear shift in how machines are being designed and deployed. Across factories, homes, emergency sites and industrial infrastructure, robotics is moving beyond demonstrations and into practical roles that support human work.

The unifying theme is not replacement, but relief—robots taking on tasks that are repetitive, hazardous or physically demanding. CES 2026 suggests that robotics is evolving from spectacle to utility, with a growing focus on systems that adapt to real environments, respond to genuine constraints and integrate into everyday workflows.

Turning computing heat into a practical heating solution for greenhouses.

Most computing systems have one unavoidable side effect: they get hot. That heat is usually treated as a problem and pushed away using cooling systems. Canaan Inc., a technology company that builds high-performance computing machines, is now showing how that same heat can be reused instead of wasted.

In a pilot project in Manitoba, Canada, Canaan is working with greenhouse operator Bitforest Investment to recover heat generated by its computing systems. Rather than focusing only on computing output, the project looks at a more basic question: what happens to all the heat these machines produce, and can it serve a practical purpose?

The idea is simple. Canaan’s computers run continuously and naturally generate heat. Instead of releasing that heat into the environment, the system captures it and uses it to warm water. That warm water is then fed into the greenhouse’s existing heating system. As a result, the greenhouse needs less additional energy to maintain the temperatures required for plant growth.

This is enabled through liquid cooling. Instead of using air to cool the machines, a liquid circulates through the system and absorbs heat more efficiently. Because a liquid can absorb and carry far more heat than the same volume of air, the recovered water reaches temperatures that are suitable for industrial use. In effect, the computing system supports greenhouse heating while continuing to perform its primary computing function.

What makes this approach workable is that it integrates with existing infrastructure. The recovered heat does not replace the greenhouse’s boilers but supplements them. By preheating the water that enters the boiler system, the overall energy demand is reduced. Based on current assumptions, Canaan estimates that a significant portion of the electricity used by the servers can be recovered as usable heat, though actual results will be confirmed once the system is fully operational.
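The heat a water loop carries away follows the standard relation Q = ṁ · c_p · ΔT. The sketch below applies it with hypothetical flow, temperature and server-power figures to show how a recovery share like the one Canaan is estimating would be calculated. Canaan has not published these numbers.

```python
WATER_SPECIFIC_HEAT_KJ_PER_KG_K = 4.186  # approximate value for liquid water


def recovered_heat_kw(flow_kg_per_s: float, inlet_c: float, outlet_c: float) -> float:
    """Thermal power carried away by the cooling water: Q = m_dot * c_p * delta_T.

    With flow in kg/s and c_p in kJ/(kg*K), the result is in kJ/s, i.e. kW.
    """
    return flow_kg_per_s * WATER_SPECIFIC_HEAT_KJ_PER_KG_K * (outlet_c - inlet_c)


def recovery_fraction(heat_kw: float, server_power_kw: float) -> float:
    """Share of the servers' electrical draw that ends up as usable heat."""
    return heat_kw / server_power_kw


# Hypothetical figures for illustration only, not Canaan's measurements.
heat = recovered_heat_kw(flow_kg_per_s=2.0, inlet_c=45.0, outlet_c=55.0)
print(f"{heat:.2f} kW recovered")
print(f"{recovery_fraction(heat, server_power_kw=100.0):.0%} of server power")
```

With these made-up inputs, the loop carries about 83.7 kW, roughly 84% of a 100 kW server load. The real figure will depend on the flow rates, temperatures and losses measured during the pilot.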

This matters because heating is one of the largest energy expenses for commercial greenhouses, particularly in colder regions like Canada. Many facilities still rely heavily on fossil-fuel-based heating, and policies such as carbon pricing are encouraging lower-emission alternatives. Reusing computing heat offers a way to improve efficiency without requiring a complete overhaul of existing systems.

The project is planned to run for an initial two-year period, allowing Canaan to evaluate real-world performance factors such as reliability, system stability and maintenance needs. These findings will help determine whether the model can be replicated in other agricultural or industrial settings.

More broadly, the initiative reflects a shift in how computing infrastructure can be designed. Instead of operating as energy-intensive systems isolated from everyday use, computing equipment can contribute to real-world applications. Canaan’s greenhouse pilot highlights how excess heat—often seen as a by-product—can become part of a more efficient and thoughtful energy loop.

In doing so, the project suggests that improving sustainability in technology is not only about reducing energy consumption, but also about finding smarter ways to reuse the energy already being generated.

How ECOPEACE uses autonomous robots and data to monitor and maintain urban water bodies.

South Korea–based water technology company ECOPEACE is working on a practical challenge many cities face today: keeping urban water bodies clean as pollution and algae growth become more frequent. Rather than relying on periodic cleanup drives, the company focuses on systems that can monitor and manage water conditions on an ongoing basis.

At the core of ECOPEACE’s work are autonomous water-cleanup robots known as ECOBOT. These machines operate directly on lakes, reservoirs and rivers, removing algae and surface waste while also collecting information about water quality. The idea is to combine cleaning with constant observation so changes in water conditions do not go unnoticed.

Alongside the robots, ECOPEACE uses a filtration and treatment system designed to process polluted water continuously. This system filters out contaminants using fine metal filters and treats the water using electrical processes. It also cleans itself automatically, which allows it to run for long periods without frequent manual maintenance.

The role of AI in this setup is largely about decision-making rather than direct control. Sensors placed across the water body collect data such as pollution levels and water quality indicators. The software then analyses this data to spot early signs of issues like algae growth. Based on these patterns, the system adjusts how the robots and filtration units operate, such as changing treatment intensity or water flow. In simple terms, the technology helps the system respond sooner instead of waiting for visible problems to appear.
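ECOPEACE has not disclosed its models, so the following is only a rule-based sketch of the "respond sooner" idea. It averages the last few readings of a hypothetical algae indicator and steps up treatment when the average crosses a watch threshold while still rising, before it reaches an action threshold. The thresholds, window size and level names are placeholders.

```python
from statistics import mean
from typing import List


def treatment_level(readings: List[float], window: int = 3,
                    watch: float = 10.0, act: float = 20.0) -> str:
    """Pick a treatment intensity from recent algae-indicator readings.

    readings: oldest-to-newest sensor values (hypothetical units).
    watch / act: placeholder thresholds for "start responding" and "full response".
    """
    recent = readings[-window:]          # averaging smooths out single noisy samples
    level = mean(recent)
    rising = len(recent) >= 2 and recent[-1] > recent[0]
    if level >= act:
        return "high"                    # bloom conditions: maximum treatment
    if level >= watch and rising:
        return "elevated"                # trending up: act before a bloom is visible
    return "normal"


for series in ([5, 6, 5, 6], [8, 11, 14, 17], [22, 25, 24]):
    print(series, "->", treatment_level(series))
```

A production system would replace these fixed thresholds with learned models, but the shape of the decision stays the same: respond to the trend, not only to the current value.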

ECOPEACE has already deployed these systems across several reservoirs, rivers and urban waterways in South Korea. Those projects have helped refine how the robots, sensors and software work together in real environments rather than controlled test sites.

Building on that experience, the company has begun expanding beyond Korea. It is currently running pilot and proof-of-concept projects in Singapore and the United Arab Emirates. These deployments are testing how the technology performs in dense urban settings where waterways are closely linked to public health, infrastructure and daily city life.

Both regions have invested heavily in smart city initiatives and water management, making them suitable test beds for automated monitoring and cleanup systems. The pilots focus on algae control, surface cleaning and real-time tracking of water quality rather than large-scale rollout.

As cities continue to grow and climate-related pressures on water systems increase, managing waterways is becoming less about occasional intervention and more about continuous oversight. ECOPEACE’s approach reflects that shift by using automation and data to address problems early and reduce the need for reactive cleanup later.

Sensing technology is facilitating the transition of drone delivery services from trial phases to regular daily operations.

A new partnership between Hesai Technology, a LiDAR solutions company, and Keeta Drone, an urban delivery platform under Meituan, offers a glimpse into how drone delivery is moving from experimentation to real-world scale.

Under the collaboration, Hesai will supply solid-state LiDAR sensors for Keeta’s next-generation delivery drones. The goal is to make everyday drone deliveries more reliable as they move from trials to routine operations. Keeta Drone operates in a challenging space: low-altitude urban airspace. Its drones deliver food, medicine and emergency supplies across cities such as Beijing, Shanghai, Hong Kong and Dubai. With more than 740,000 deliveries completed across 65 routes, the company is no longer testing the concept. It is scaling it. For that scale to work, drones must be able to navigate crowded environments filled with buildings, trees, power lines and unpredictable conditions. This is where Hesai’s technology comes in.

Hesai’s solid-state LiDAR is integrated into Keeta’s latest long-range delivery drones. LiDAR stands for Light Detection and Ranging. In simple terms, it is a sensing technology that helps machines understand their surroundings by sending out laser pulses and measuring how they bounce back. Unlike GPS, LiDAR does not depend on satellite signals. Instead, it gives drones a direct sense of their surroundings, helping them spot small but critical obstacles like wires or tree branches.
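The "measuring how they bounce back" step rests on the pulsed time-of-flight principle. A pulse travels to an obstacle and back at the speed of light, so the range is half the round-trip distance, d = c · t / 2. The sketch below shows only that arithmetic. It makes no claim about how Hesai's FTX sensor processes returns internally.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def range_from_round_trip(round_trip_s: float) -> float:
    """Distance to the reflecting surface from a pulse's round-trip time.

    The pulse travels out and back, so the one-way distance is half of c * t.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


# A return arriving 200 nanoseconds after the pulse left.
print(f"{range_from_round_trip(200e-9):.2f} m")
```

A 200-nanosecond round trip corresponds to an obstacle about 30 m away. That tiny time scale is why LiDAR needs precise timing electronics to resolve thin objects like wires.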

In a recent demonstration, Keeta Drone completed a nighttime flight using LiDAR-based navigation alone without relying on cameras or satellite positioning. This shows how the technology can support stable operations even when visibility is poor or GPS signals are limited.

The LiDAR system used in these drones is Hesai’s second-generation solid-state model known as FTX. Compared with earlier versions, the sensor offers higher resolution while being smaller and lighter—important considerations for airborne systems where weight and space are limited. The updated design also reduces integration complexity, making it easier to incorporate into commercial drone platforms. Large-scale production of the sensor is expected to begin in 2026.

From Hesai’s perspective, delivery drones are one of several forms robots are expected to take in the coming decades. Industry forecasts suggest robots will increasingly appear in many roles from industrial systems to service applications, with drones becoming a familiar part of urban infrastructure rather than a novelty.

Taken together, the partnership highlights a practical evolution in drone delivery. For Keeta Drone, better sensing improves safety and reliability. For the broader industry, it signals that drone logistics is entering a more mature phase, one defined less by experimentation and more by dependable execution.

As cities grow more complex, the question is no longer whether drones can fly but whether they can do so reliably, safely and at scale. At its core, this partnership is not about drones or sensors as products. It is about what it takes to make a complex system work quietly in real cities. As drone delivery moves out of pilot zones and into everyday use, reliability matters more than novelty.

December 31, 2025

Amid AI and tech startups, Eastseabrother proved the power of demand and trust.

At a Silicon Valley pitch event crowded with AI, SaaS and deep-tech startups, the company that stood out was not selling software or algorithms. It was selling pet treats.

Eastseabrother, a premium pet food brand from South Korea, ranked first at a Plug and Play–hosted investor pitch competition in Sunnyvale. The product itself is simple: single-ingredient pet treats made from wild-caught seafood sourced from Korea’s East Sea. The company follows a principle it calls “Only What the Sea Allows”, working directly with regional fishermen while avoiding overfishing. The treats contain no additives and are minimally processed, but what sets Eastseabrother apart is not novelty but control over sourcing, supply chains and consistency.

That clarity helped the company walk away with both Best Product and Best Potential. “Investors asked detailed questions about repeat purchase rates and customer feedback, not just our technology or supply chain”, said Eunyul Kim, CEO of Eastseabrother. “That told us the market is shifting—real consumer trust now carries as much weight as a compelling tech narrative”.

What truly caught investors’ attention was not an ambitious vision of the future, but concrete evidence of traction today. Eastseabrother has already secured shelf space in specialty pet stores across California, New York and North Carolina, including an exclusive partnership with EarthWise Pet, a national specialty retail chain. At a consumer showcase at San Francisco’s Ferry Building, the brand recorded the highest on-site sales among all participating companies.

At its core, the pitch was built on simplicity: one ingredient, clear sourcing and a defined customer need. In a market saturated with complex products and abstract claims, that focus and transparency stood out.

The judges’ decision also reflects a broader shift in venture capital thinking. Not every successful startup is built on complex software or high-tech innovation. In categories like pet care—where trust, quality and transparency shape buying behavior—execution and credibility can matter more than technical sophistication.

Today, Eastseabrother has extended its reach beyond the U.S., expanding into Singapore and Hong Kong, with additional plans to grow further in North America as demand for premium pet food rises. And the broader takeaway from this pitch is not that consumer brands are overtaking tech startups. It is that investors are increasingly focused on fundamentals: who is buying, why they are returning and whether the business can sustain itself beyond the pitch deck.

The collaboration between Oversonic Robotics and STMicroelectronics highlights how robotics is beginning to fill gaps traditional automation cannot.

Oversonic Robotics, an Italian company known for building cognitive humanoid robots, has signed an agreement with STMicroelectronics, one of the world’s largest semiconductor manufacturers, to deploy humanoid robots inside semiconductor plants.

According to the companies, this is the first time cognitive humanoid robots will be used operationally inside semiconductor manufacturing facilities. And the first deployment has already taken place at ST’s advanced packaging and test plant in Malta.

At the center of the collaboration is RoBee, Oversonic’s humanoid robot. RoBee is designed to carry out support tasks within industrial environments, particularly where flexibility and interaction with human workers are required. In ST’s factories, the robots will assist with complex manufacturing and logistics flows linked to new semiconductor products. They are intended to work alongside existing automation systems, not replace them.

RoBee is notable for its ability to operate in environments shared with people. It is currently the only humanoid robot certified for use in both industrial and healthcare settings and is already in operation within several Italian companies. The robot is also being used in experimental hospital programs. That background helped position RoBee for deployment in tightly controlled manufacturing environments such as semiconductor plants.

Fabio Puglia, President of Oversonic Robotics, described the agreement as a milestone for deploying humanoid robots in complex industrial settings: “The partnership with STMicroelectronics is a great source of pride for us because it embodies the vision of cognitive robotics that Oversonic has brought to the industrial and healthcare markets. Being the first to introduce cognitive humanoid robots in a sophisticated production context such as semiconductors means measuring ourselves against the highest standards in terms of reliability, safety and operational continuity. This agreement represents a fundamental milestone for Oversonic and, more generally, for the industrial challenges these new machines are called to face in innovative and highly complex environments, alongside people and supporting their quality of work”.

From STMicroelectronics’ side, the use of humanoid robots is framed as part of a broader effort to manage growing manufacturing complexity. The company said RoBee will support complex tasks and help manage the intricate production flows required by newer semiconductor products. It is also expected to contribute to improved product quality and shorter manufacturing cycle times. The robots are designed to integrate with existing automation and software systems, helping improve safety and operational continuity.

In semiconductor manufacturing, precision and reliability leave little room for experimentation. Therefore, introducing humanoid robots into this environment signals a practical shift. It shows how robotics is starting to fill gaps that traditional automation has struggled to address.

December 30, 2025

How Korea is trying to take control of its AI future.

SK Telecom, South Korea’s largest mobile operator, has unveiled A.X K1, a hyperscale artificial intelligence model with 519 billion parameters. The model sits at the center of a government-backed effort to build advanced AI systems and domestic AI infrastructure within Korea. This comes at a time when companies in the United States and China largely dominate the development of the most powerful large language models.

Rather than framing A.X K1 as just another large language model, SK Telecom is positioning it as part of a broader push to build sovereign AI capacity from the ground up. The model is being developed as part of the Korean government’s Sovereign AI Foundation Model project, which aims to ensure that core AI systems are built, trained and operated within the country. In simple terms, the initiative focuses on reducing reliance on foreign AI platforms and cloud-based AI infrastructure, while giving Korea more control over how artificial intelligence is developed and deployed at scale.

One of the gaps this approach is trying to address is how AI knowledge flows across a national ecosystem. Today, the most powerful AI foundation models are often closed, expensive and concentrated within a small number of global technology companies. A.X K1 is designed to function as a “teacher model,” meaning it can transfer its capabilities to smaller, more specialized AI systems. This allows developers, enterprises and public institutions to build tailored AI tools without starting from scratch or depending entirely on overseas AI providers.
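SK Telecom has not detailed how A.X K1 will transfer its capabilities. One widely used technique for a teacher model is knowledge distillation, in which a smaller student model is trained to match the teacher's softened output probabilities. The sketch below computes the classic distillation loss (KL divergence at temperature T, scaled by T²) in plain Python. Treat it as an illustration of the general technique, not SK Telecom's recipe.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax; higher temperatures give softer distributions."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits: List[float], student_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened outputs, scaled by T^2.

    The student is pushed to match the teacher's whole probability
    distribution rather than only its top answer, which is how a large
    model's behaviour can be passed on to a smaller one.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]   # a confident teacher's logits
student = [2.0, 1.5, 1.0]   # a less certain student's logits
print(round(distillation_loss(teacher, student), 4))
```

In training, this loss is usually combined with an ordinary task loss on labelled data, and the student's weights are updated to shrink both.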

That distinction matters because most real-world applications of artificial intelligence do not require massive models operating independently. They require focused, reliable AI systems designed for specific use cases such as customer service, enterprise search, manufacturing automation or mobility. By anchoring those systems to a large, domestically developed foundation model, SK Telecom and its partners are aiming to create a more resilient and self-sustaining AI ecosystem.

The effort also reflects a shift in how AI is being positioned for everyday use. SK Telecom plans to connect A.X K1 to services that already reach millions of users, including its AI assistant platform A., which operates across phone calls, messaging, web services and mobile applications. The broader goal is to make advanced AI feel less like a distant research asset and more like an embedded digital infrastructure that supports daily interactions.

This approach extends beyond consumer-facing services. Members of the SKT consortium are testing how the hyperscale AI model can support industrial and enterprise applications, including manufacturing systems, game development, robotics and autonomous technologies. The underlying logic is that national competitiveness in artificial intelligence now depends not only on model performance, but on whether those models can be deployed, adapted and validated in real-world environments.

There is also a hardware dimension to the project. Operating an AI model at the 500-billion-parameter scale places heavy demands on computing infrastructure, particularly memory performance and communication between processors. A.X K1 is being used to test and validate Korea’s semiconductor and AI chip capabilities under real workloads, linking large-scale AI software development directly to domestic semiconductor innovation.
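A quick back-of-the-envelope calculation shows why memory dominates at this scale. The sketch assumes weights stored at 16 or 8 bits per parameter; the precision SK Telecom uses is not stated in the source.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory needed just to hold the model weights, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


PARAMS = 519e9  # A.X K1 parameter count reported by SK Telecom

# Assumed storage precisions; activations and caches are not included.
for label, nbytes in (("16-bit", 2), ("8-bit", 1)):
    print(f"{label}: {weight_memory_gb(PARAMS, nbytes):,.0f} GB")
```

That works out to roughly 1,038 GB at 16-bit and 519 GB at 8-bit for the weights alone, before counting activations or key-value caches. Both figures are well beyond the memory of a single current accelerator, so the model has to be split across many chips, which is why communication between processors matters as much as raw compute.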

The initiative brings together technology companies, universities and research institutions, including Krafton, KAIST and Seoul National University. Each contributes specialized expertise ranging from data validation and multimodal AI research to system scalability. More than 20 institutions have already expressed interest in testing and deploying the model, reinforcing the idea that A.X K1 is being treated as shared national AI infrastructure rather than a closed commercial product.

Looking ahead, SK Telecom plans to release A.X K1 as open-source AI software, alongside APIs and portions of the training data. If fully implemented, the move could lower barriers for developers, startups and researchers across Korea’s AI ecosystem, enabling them to build on top of a large-scale foundation model without incurring the cost and complexity of developing one independently.

From AI love affairs to cosmic survival, 2026 has it all.

Grab your popcorn: the 2026 sci-fi movie slate is stacked. We’re getting everything from post-apocalyptic survival films to AI thrillers, plus a big space adventure and a fresh DC superhero story. Some films launch new worlds while others expand familiar ones, but all of them look like the kind of movies you’ll want to talk about after the credits.

Here are five upcoming sci-fi movies to mark on your calendar.

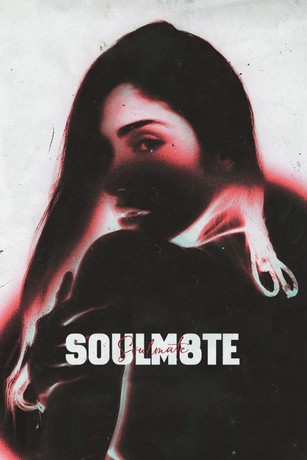

Release Date: January 9, 2026

Director: Kate Dolan

Stars: Lily Sullivan, David Rysdahl and Claudia Doumit

If you like your sci-fi with a creepy edge, Soulm8te is very much in that lane. A spin-off from the M3GAN universe, the film follows a man grieving the loss of his wife who turns to an AI android to ease the pain. At first, it seems to help. The connection feels real, even comforting. But before long, it becomes a little too real and slips into something far more sinister. What makes Soulm8te unsettling is how close it feels to the present. AI companions are no longer science fiction, and the film plays with that reality in a way that feels intimate rather than futuristic. Directed by Kate Dolan, the story focuses on quiet unease instead of spectacle, allowing tension to build as affection turns possessive and attachment becomes dangerous. The film is produced by Allison Williams and James Wan, both closely involved in the hit horror franchise M3GAN, and their experience with technology-driven horror is clearly felt here. Fans of grounded, psychological sci-fi should keep this one on their radar.

Release Date: January 9, 2026

Director: Ric Roman Waugh

Stars: Gerard Butler, Morena Baccarin, Amber Rose Revah, Sophie Thompson, Trond Fausa Aurvåg

Back in 2020, Greenland introduced audiences to John Garrity (Gerard Butler), a father racing against time to save his family as comet fragments threatened to wipe out life on Earth. The film ended with survivors heading into bunkers deep in Greenland, hanging on to the last thin thread of hope. This sequel follows the Garrity family as they leave the safety of underground shelters and face a world that no longer resembles home. The setting moves across a battered Europe, where every decision carries weight and every journey feels uncertain. Rather than repeating the ticking-clock chaos of the original, Migration leans into endurance, exhaustion and the question of whether rebuilding is even possible. It’s a post-apocalyptic movie about movement, loss and the cost of starting over.

Release Date: March 20, 2026

Directors: Phil Lord & Chris Miller

Stars: Ryan Gosling, Milana Vayntrub, Sandra Hüller

Based on Andy Weir’s best-selling novel, Project Hail Mary is shaping up to be one of the most talked-about space survival films of 2026. Ryan Gosling stars as Ryland Grace, an unlikely astronaut whose journey into space begins with confusion rather than heroics. Grace, a former junior high science teacher, wakes up alone on a spacecraft, cut off from Earth and missing key memories about how he got there. As pieces slowly fall into place, so does the scale of the problem he’s been sent to solve. The film blends real science with high-stakes isolation, balancing quiet moments with the pressure of a mission that affects the entire planet. Directed by Phil Lord and Chris Miller, Project Hail Mary promises tension, curiosity and a heavy dose of human vulnerability set against the vastness of space.

Release Date: March 27, 2026

Director: Ridley Scott

Stars: Jacob Elordi, Josh Brolin

The Dog Stars strips the apocalypse down to its bare essentials. Based on Peter Heller’s novel of the same name, the film features a screenplay by Mark L. Smith and Christopher Wilkinson, known respectively for The Revenant and Ali. The setup of The Dog Stars is simple and bleak: a virus has erased most of humanity. What’s left is silence, abandoned airfields and roaming scavengers known as the “Reapers” who prey on the few survivors left behind.

Jacob Elordi plays Hig, a pilot living in isolation with his dog and a heavily armed companion. His days follow a strict routine, broken only by short flights in his aging Cessna. That fragile balance shatters when a distant radio signal breaks through the quiet. It’s the first real sign of life he has heard in years, and it draws him toward a journey that could change everything. Directed by Ridley Scott, the film focuses less on large-scale destruction and more on loneliness, hope and the risk of reaching out in a broken world. The result is a post-apocalyptic thriller that feels intimate, reflective and quietly tense.

Release Date: June 26, 2026

Director: Craig Gillespie

Stars: Milly Alcock, Jason Momoa, Matthias Schoenaerts

Supergirl: Woman of Tomorrow offers a very different take on the DC universe. This is a cosmic sci-fi story first, superhero film second. Kara Zor-El is older, tougher and shaped by memories of a world she lost. Unlike her cousin Superman, she remembers the destruction of Krypton clearly, and that history weighs heavily on her. The film follows Kara as she crosses paths with a young alien seeking justice, pulling her into a dangerous journey across distant worlds. Rather than focusing on Earth-saving spectacle, the story explores identity, grief and what heroism looks like far from home. With Milly Alcock stepping into the role, the 2026 Supergirl film aims to expand DC’s sci-fi side while giving the character emotional depth rarely seen on screen.

One reason science fiction movies stick with us is that they ask big questions in a way that feels personal. What happens when tech starts filling emotional gaps? What does survival look like when the world doesn’t bounce back? And how far would you go to save everyone you’ve ever known? If you’re looking for 2026 sci-fi movies that range from gritty to hopeful to unsettling, this lineup has you covered.

A new bet on early heart failure detection and why women’s health is at the center.

Heart disease does not always announce itself clearly, especially in women. Many of the symptoms are ordinary, including fatigue, shortness of breath and swelling. These signs are frequently dismissed or explained away. As a result, many women are diagnosed late, when treatment options are narrower and outcomes are worse. That diagnostic gap is the context behind a recent investment involving Ultromics and the American Heart Association’s Go Red for Women Venture Fund.

Ultromics is a health technology company that uses artificial intelligence to help doctors spot early signs of heart failure from routine heart scans. It has received a strategic investment from the American Heart Association’s Go Red for Women Venture Fund.

The focus of the investment is a long-standing blind spot in cardiac care. Heart failure with preserved ejection fraction, or HFpEF, affects millions of people worldwide, with women disproportionately impacted. It is one of the most common forms of heart failure, yet also one of the hardest to diagnose. Studies show that women are twice as likely as men to develop the condition and that around 64% of cases go undiagnosed in routine clinical practice.

Ultromics works with a tool most patients already encounter during heart care: the echocardiogram. There is no new scan and no added burden for patients. Its software, EchoGo, analyzes standard heart ultrasound images and looks for subtle patterns that point to early heart failure. The goal is clarity: giving clinicians better signals earlier, before the disease advances.

“Heart failure with preserved ejection fraction is one of the most complex and overlooked diseases in cardiology. For too long, clinicians have been expected to diagnose it using tools that weren't built to detect it and as a result, many patients are identified too late,” said Ross Upton, PhD, CEO and Founder of Ultromics. “By augmenting physicians' decision making with EchoGo, we can help them recognize disease at an earlier stage and treat it more effectively.”

The stakes are high. When a case is missed, treatment is delayed, and that delay matters. New therapies can reduce hospitalizations and improve survival, but only if patients are diagnosed in time.

This is why early detection has become a priority for mission-driven investors. “Closing the diagnostic gap by recognizing disease before irreversible damage occurs is critical to improving health for women—and everyone,” said Tracy Warren, Senior Managing Director, Go Red for Women Venture Fund. “We are gratified to see technologies, such as this one, that are accepted by leading institutions as advances in the field of cardiovascular diagnostics. That's the kind of progress our fund was created to accelerate.”

Ultromics’ platform is already cleared by regulators for clinical use and is being deployed in hospitals across the US and UK. The company says its technology has analyzed hundreds of thousands of heart scans, helping clinicians reach clearer conclusions when traditional methods fall short.

Taken together, the investment reflects a broader change in healthcare. Attention is moving earlier, toward detection instead of reaction and toward tools that fit into existing care rather than complicate it. In this case, the funding is not about introducing something new into the system. It is about seeing what has long been missed, and doing so in time.

From TV to YouTube, the Oscars’ global shift reveals how entertainment, access and platforms are reshaping cultural institutions.

The Oscars are moving to YouTube. The Academy of Motion Picture Arts and Sciences has signed a multi-year agreement that makes YouTube the exclusive global home of the Oscars from 2029 through 2033. From the ceremony itself to red carpet coverage, behind-the-scenes access and the Governors Ball, the entire experience will live on a platform many people already open every day.

On the surface, it looks like a distribution shift. In reality, it signals a broader strategic reset. For decades, television delivered scale for cultural institutions. Today, reach and discovery live on platforms, not channels. By choosing YouTube, the Academy is quietly acknowledging that cultural relevance is now built where audiences already are. In that context, YouTube is no longer just a place to watch clips but an emerging piece of cultural infrastructure.

What also stands out is how the Oscars are being reframed. The partnership is not limited to one night a year. Alongside the ceremony, YouTube will host year-round Academy programming through the Oscars YouTube channel. That includes the nominations announcement, the Governors Awards, the Student Academy Awards, the Scientific and Technical Awards, filmmaker interviews, podcasts and education programs. Instead of a single broadcast moment, the Oscars are turning into an always-on ecosystem.

Accessibility is another central pillar of the deal. The Oscars will be free to watch globally, supported by closed captioning and audio tracks in multiple languages. This is less about nice-to-have features and more about staying relevant in a global, digital-first world. Younger audiences and viewers outside traditional Western markets expect access by default. The Academy is clearly building with that expectation in mind.

There is also a deeper exchange happening between heritage and technology. YouTube gains cultural weight by hosting one of the world’s most established creative institutions. The Academy, in turn, gains technological legitimacy and a clearer path into the future.

That balance extends to how the transition is being handled. The Academy’s domestic broadcast partnership with Disney’s ABC will continue through the 100th Oscars in 2028, and the international arrangement with Disney’s Buena Vista International remains in place until then. This is not an abrupt break from legacy media but a carefully phased shift, managed without burning bridges.

“We are thrilled to enter into a multifaceted global partnership with YouTube to be the future home of the Oscars and our year-round Academy programming,” said Academy CEO Bill Kramer and Academy President Lynette Howell Taylor. “The Academy is an international organization and this partnership will allow us to expand access to the work of the Academy to the largest worldwide audience possible — which will be beneficial for our Academy members and the film community. This collaboration will leverage YouTube’s vast reach and infuse the Oscars and other Academy programming with innovative opportunities for engagement while honoring our legacy. We will be able to celebrate cinema, inspire new generations of filmmakers and provide access to our film history on an unprecedented global scale.”

From YouTube’s side, the partnership places the platform firmly in the center of global cultural moments. “The Oscars are one of our essential cultural institutions, honoring excellence in storytelling and artistry,” said Neal Mohan, CEO, YouTube. “Partnering with the Academy to bring this celebration of art and entertainment to viewers all over the world will inspire a new generation of creativity and film lovers while staying true to the Oscars’ storied legacy.”

Google Arts & Culture extends the partnership beyond the ceremony. Select Academy Museum exhibitions and materials from the Academy’s 52-million-item collection will be made digitally accessible worldwide, bringing film history and education onto the same platform.

Taken together, the deal is less about where the Oscars will stream and more about how cultural institutions are adapting to the changing landscape. The Academy is positioning itself to be present year-round, globally accessible and aligned with the platforms that shape everyday viewing.