What Overstory’s vegetation intelligence reveals about wildfire and outage risk.

Updated

January 15, 2026 8:03 PM

Aerial photograph of a green field. PHOTO: UNSPLASH

Managing vegetation around power lines has long been one of the biggest operational challenges for utilities. A single tree growing too close to electrical infrastructure can trigger outages or, in the worst cases, spark fires. With vast service territories, shifting weather patterns and limited visibility into changing landscape conditions, utilities often rely on inspections and broad wildfire-risk maps that provide only partial insight into where the most serious threats actually are.

Overstory, a company specializing in AI-powered vegetation intelligence, addresses this visibility gap with a platform that uses high-resolution satellite imagery and machine-learning models to interpret vegetation conditions in detail. Instead of assessing risk by region, terrain type or outdated maps, the system evaluates conditions tree by tree. This helps utilities identify precisely where hazards exist and which areas demand immediate intervention. That precision is critical in regions where small variations in vegetation density, fuel type or moisture levels can influence how quickly a spark might spread.

At the core of this technology is Overstory’s proprietary Fuel Detection Model, designed to identify vegetation most likely to ignite or accelerate wildfire spread. Unlike broad, publicly available fire-risk maps, the model analyzes the specific fuel conditions surrounding electrical infrastructure. By pinpointing exact locations where certain fuel types or densities create elevated risk, utilities can plan targeted wildfire-mitigation work rather than relying on sweeping, resource-heavy maintenance cycles.

This data-driven approach is reshaping how utilities structure vegetation-management programs. Having visibility into where risks are concentrated—and which trees or areas pose the highest threat—allows teams to prioritize work based on measurable evidence. For many utilities, this shift supports more efficient crew deployment, reduces unnecessary trims and builds clearer justification for preventive action. It also offers a path to strengthening grid reliability without expanding operational budgets.

Overstory’s recent US$43 million Series B funding round, led by Blume Equity with support from Energy Impact Partners and existing investors, reflects growing interest in AI tools that translate environmental data into actionable wildfire-prevention intelligence. The investment will support further development of Overstory’s risk models and help expand access to its vegetation-intelligence platform.

Yet the company’s focus remains consistent: giving utilities sharper, real-time visibility into the landscapes they manage. By converting satellite observations into clear and actionable insights, Overstory’s AI system provides a more informed foundation for decisions that impact grid safety and community resilience. In an environment where a single missed hazard can have far-reaching consequences, early and precise detection has become an essential tool for preventing wildfires before they start.


A new safety layer aims to help robots sense people in real time without slowing production

Updated

February 13, 2026 10:44 AM

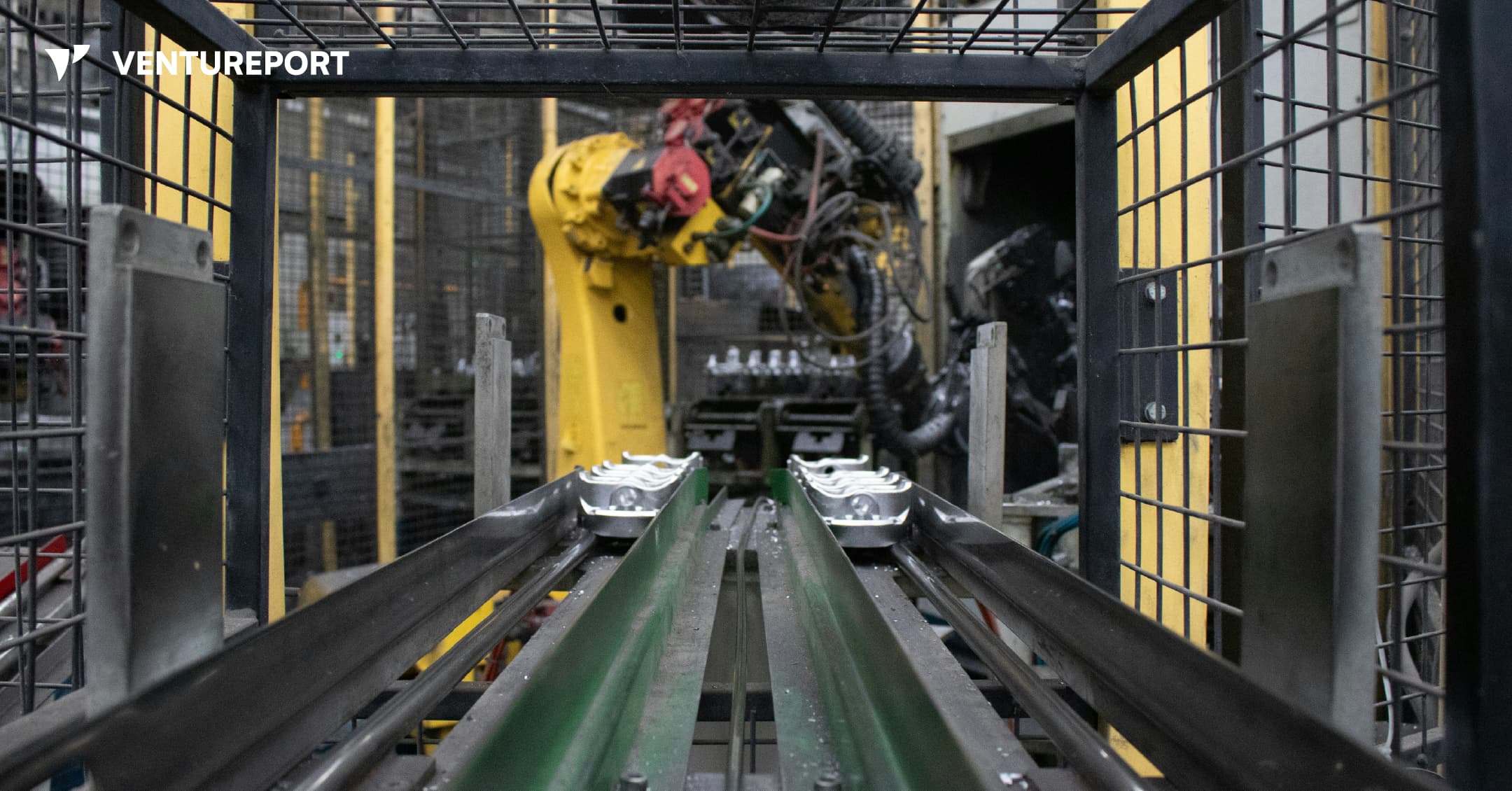

An industrial robot in a factory. PHOTO: UNSPLASH

Algorized has raised US$13 million in a Series A round to advance its AI-powered safety and sensing technology for factories and warehouses. The California- and Switzerland-based robotics startup says the funding will help expand a system designed to transform how robots interact with people. The round was led by Run Ventures, with participation from the Amazon Industrial Innovation Fund and Acrobator Ventures, alongside continued backing from existing investors.

At its core, Algorized is building what it calls an intelligence layer for “physical AI” — industrial robots and autonomous machines that function in real-world settings such as factories and warehouses. While generative AI has transformed software and digital workflows, bringing AI into physical environments presents a different challenge. In these settings, machines must not only complete tasks efficiently but also move safely around human workers.

This is where a clear gap exists. Today, most industrial robots rely on camera-based monitoring systems or predefined safety zones. For instance, when a worker steps into a marked area near a robotic arm, the system is programmed to slow down or stop the machine completely. This approach reduces the risk of accidents. However, it also means production lines can pause frequently, even when there is no immediate danger. In high-speed manufacturing environments, those repeated slowdowns can add up to significant productivity losses.

Algorized’s technology is designed to reduce that trade-off between safety and efficiency. Instead of relying solely on cameras, the company uses wireless signals, including Ultra-Wideband (UWB), mmWave and Wi-Fi, to sense human presence. By analysing small changes in these radio signals, the system can pick up motion and breathing patterns in a space. This helps machines determine where people are and how they are moving, even in conditions where cameras may struggle, such as poor lighting, dust or visual obstruction.

Importantly, this data is processed locally at the facility itself — not sent to a remote cloud server for analysis. In practical terms, this means decisions are made on-site, within milliseconds. Reducing this delay, or latency, allows robots to adjust their movements immediately instead of defaulting to a full stop. The aim is to create machines that can respond smoothly and continuously, rather than reacting in a binary stop-or-go manner.
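To make the contrast between a binary stop and a continuous response concrete, here is a minimal Python sketch. It is an illustration only, not Algorized's implementation: the function names, the distance thresholds and the linear scaling rule are all assumptions chosen for the example.

```python
def zone_based_speed(distance_m: float, zone_radius_m: float = 2.0) -> float:
    """Binary rule: full stop inside the safety zone, full speed outside it.

    Returns a speed factor of 0.0 or 1.0. The 2.0 m radius is hypothetical.
    """
    return 0.0 if distance_m < zone_radius_m else 1.0


def graded_speed(distance_m: float, stop_m: float = 0.5, full_m: float = 2.0) -> float:
    """Continuous rule: scale speed linearly with the person's distance.

    Returns 0.0 at or below stop_m, 1.0 at or beyond full_m, and a
    proportional value in between. Both thresholds are hypothetical.
    """
    if distance_m <= stop_m:
        return 0.0
    if distance_m >= full_m:
        return 1.0
    return (distance_m - stop_m) / (full_m - stop_m)


if __name__ == "__main__":
    # Compare the two rules for a worker at several distances from the robot.
    for d in (0.3, 1.25, 3.0):
        print(f"{d:.2f} m  zone={zone_based_speed(d):.2f}  graded={graded_speed(d):.2f}")
```

Under these assumed thresholds, a worker standing 1.25 m away halts the machine under the zone rule, while the graded rule keeps it running at half speed. A real system would also have to account for sensing latency and certified safety limits, which this sketch leaves out.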

With the new funding, Algorized plans to scale commercial deployments of its platform, known as the Predictive Safety Engine. The company will also invest in refining its intent-recognition models, which are designed to anticipate how humans are likely to move within a workspace. In parallel, it intends to expand its engineering and support teams across Europe and the United States. These efforts build on earlier public demonstrations and ongoing collaborations with manufacturing partners, particularly in the automotive and industrial sectors.

For investors, the appeal goes beyond safety compliance. As factories become more automated, even small improvements in uptime and workflow continuity can translate into meaningful financial gains. Because Algorized’s system works with existing wireless infrastructure, manufacturers may be able to upgrade machine awareness without overhauling their entire hardware setup.

More broadly, the company is addressing a structural limitation in industrial automation. Robotics has advanced rapidly in precision and power, yet human-robot collaboration is still governed by rigid safety systems that prioritise stopping over adapting. By combining wireless sensing with edge-based AI models, Algorized is attempting to give machines a more continuous awareness of their surroundings from the start.