AI actor Tilly Norwood releases a musical video arguing that artificial intelligence can expand creativity in film

Updated March 13, 2026 2:18 PM

AI Actor Tilly Norwood. PHOTO: INSTAGRAM@TILLYNORWOOD

As Hollywood prepares for this weekend’s Oscars, a different kind of performer is stepping into the spotlight — one that doesn’t physically exist.

Tilly Norwood, described as the world’s first AI actor, has released her debut musical comedy video, Take the Lead. The project arrives at a moment when artificial intelligence has become one of the most contentious topics in the film industry.

The song’s message is simple: AI should not be seen as a threat to actors but as another creative tool. The release also offers a first look at what Norwood’s creators call the “Tillyverse”, envisioned as a cloud-based entertainment world where AI characters can live, interact and perform.

Behind the character is actor and producer Eline van der Velden. She is the CEO of production company Particle6 and AI talent studio Xicoia. Van der Velden created Tilly as a way to experiment with how artificial intelligence could be used in storytelling.

The timing is not accidental. The entertainment industry has spent the past few years debating the role AI should play in filmmaking and acting. Questions about digital replicas, automated performances and creative ownership continue to divide artists and studios.

Norwood’s musical video enters that debate with a different tone. Instead of warning about AI replacing actors, the project suggests that the technology could expand what performers are able to do.

The video itself also serves as a technical experiment. The song Take the Lead was generated using the AI music platform Suno. The video was then produced using a combination of widely available AI tools and Particle6’s own creative process.

One of the newer techniques used in the project is performance capture. Van der Velden physically acted out Tilly’s movements and expressions so the digital character could mirror a human performance.

But the production was far from automated. According to Particle6, a team of 18 people worked on the video. The group included a director, editor, production designer, costume designer, comedy writer and creative technologist. In other words, the project still relied heavily on human creativity.

“Tilly has always been a vehicle to test the creative capabilities and boundaries of AI,” van der Velden said. “It’s not about taking anyone’s job.” She added that even with powerful tools, good AI content still takes time, taste and creative direction.

The project also reflects how quickly production technology is evolving. Tools that once required large studios are now accessible to smaller creative teams experimenting with AI-driven storytelling.

For Particle6, the character of Tilly Norwood acts as a testing ground. Each project explores how AI performers might be developed, directed and integrated into entertainment. Whether audiences embrace digital actors remains an open question. Many in the industry are still wary of how AI could reshape creative work.

But projects like Take the Lead show another possibility. Instead of replacing performers, artificial intelligence could become part of the creative process itself. In that sense, Tilly Norwood may represent something more than a virtual performer. She is also an experiment in how humans and machines might collaborate in the future of entertainment.

A new safety layer aims to help robots sense people in real time without slowing production

Updated February 13, 2026 10:44 AM

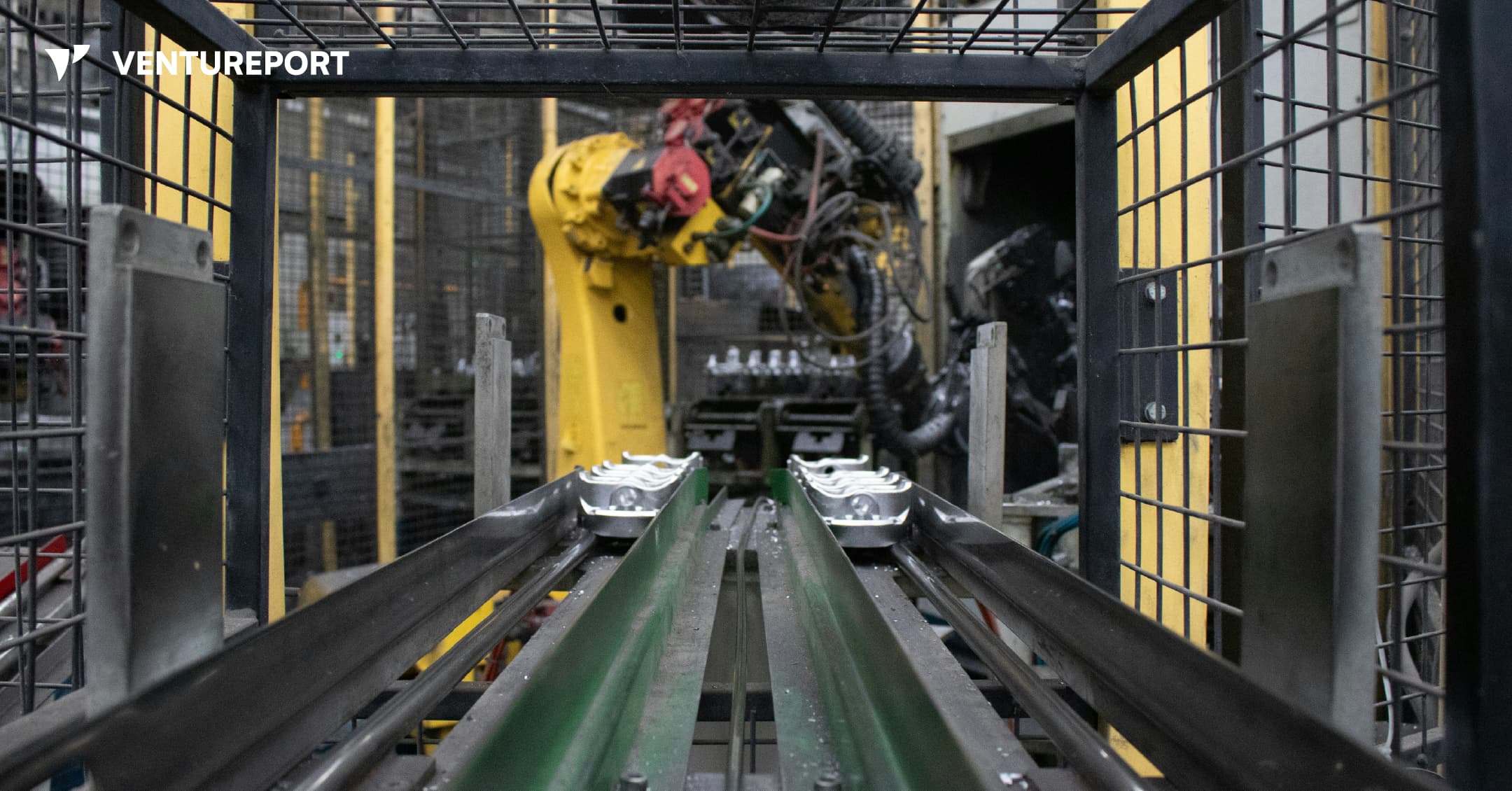

An industrial robot in a factory. PHOTO: UNSPLASH

Algorized has raised US$13 million in a Series A round to advance its AI-powered safety and sensing technology for factories and warehouses. The California- and Switzerland-based robotics startup says the funding will help expand a system designed to transform how robots interact with people. The round was led by Run Ventures, with participation from the Amazon Industrial Innovation Fund and Acrobator Ventures, alongside continued backing from existing investors.

At its core, Algorized is building what it calls an intelligence layer for “physical AI” — industrial robots and autonomous machines that function in real-world settings such as factories and warehouses. While generative AI has transformed software and digital workflows, bringing AI into physical environments presents a different challenge. In these settings, machines must not only complete tasks efficiently but also move safely around human workers.

Current safety systems leave a clear gap here. Today, most industrial robots rely on camera-based monitoring systems or predefined safety zones. For instance, when a worker steps into a marked area near a robotic arm, the system slows the machine down or stops it completely. This approach reduces the risk of accidents, but it also means production lines can pause frequently, even when there is no immediate danger. In high-speed manufacturing environments, those repeated slowdowns can add up to significant productivity losses.

Algorized’s technology is designed to reduce that trade-off between safety and efficiency. Instead of relying solely on cameras, the company uses wireless signals, including Ultra-Wideband (UWB), mmWave and Wi-Fi, to sense human presence. By analysing small changes in these radio signals, the system can pick up motion and breathing patterns in a space. This helps machines determine where people are and how they are moving, even in conditions where cameras may struggle, such as poor lighting, dust or visual obstruction.
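
To make the idea of sensing presence from small signal changes concrete, here is a minimal sketch in Python. It is not Algorized’s method, which the company has not detailed here. It assumes a hypothetical list of received signal amplitude readings and flags motion when their recent variance crosses an illustrative threshold. The function name, window size and threshold are all assumptions.

```python
from statistics import pvariance


def motion_detected(samples, window=8, threshold=0.05):
    """Flag motion when recent signal readings fluctuate strongly.

    `samples` is a hypothetical list of signal amplitude readings.
    `window` and `threshold` are illustrative values, not tuned ones.
    """
    if len(samples) < window:
        return False  # not enough data to judge yet
    recent = samples[-window:]
    # A still room gives a nearly flat signal; a moving body perturbs it.
    return pvariance(recent) > threshold


# Hypothetical readings: an empty room versus someone walking past.
still = [1.00, 1.01, 0.99, 1.00, 1.01, 1.00, 0.99, 1.00]
moving = [1.00, 1.40, 0.60, 1.30, 0.70, 1.20, 0.50, 1.10]

print(motion_detected(still))
print(motion_detected(moving))
```

A real system would work on much richer radio measurements than a single amplitude value, but the principle of looking for deviations from a quiet baseline is the same.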

Importantly, this data is processed locally at the facility rather than sent to a remote cloud server, so decisions are made on-site within milliseconds. Reducing this delay, or latency, allows robots to adjust their movements immediately instead of defaulting to a full stop. The aim is to create machines that respond smoothly and continuously, rather than reacting in a binary stop-or-go manner.
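
The difference between a binary stop-or-go rule and a continuous response can be sketched in a few lines of Python. This is an illustration only, not Algorized’s Predictive Safety Engine: the function names and the distances (a 2 m zone, a 0.5 m stop distance) are hypothetical, and speed is expressed as a fraction of full speed.

```python
def zone_stop_speed(distance_m, zone_radius_m=2.0):
    """Binary rule: full stop if a person is inside the zone, else full speed."""
    return 0.0 if distance_m < zone_radius_m else 1.0


def scaled_speed(distance_m, stop_m=0.5, full_m=2.0):
    """Continuous rule: speed ramps linearly from 0 at stop_m to 1 at full_m."""
    if distance_m <= stop_m:
        return 0.0
    if distance_m >= full_m:
        return 1.0
    return (distance_m - stop_m) / (full_m - stop_m)


# A person standing 1.25 m from the robot (hypothetical reading).
print(zone_stop_speed(1.25))  # binary rule halts the machine
print(scaled_speed(1.25))     # continuous rule keeps it moving at reduced speed
```

Under the binary rule, the line stops whenever someone enters the zone. Under the continuous rule, the robot keeps working at reduced speed until the person is close. That second behaviour only makes sense if the position estimate is fresh, which is why low latency matters.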

With the new funding, Algorized plans to scale commercial deployments of its platform, known as the Predictive Safety Engine. The company will also invest in refining its intent-recognition models, which are designed to anticipate how humans are likely to move within a workspace. In parallel, it intends to expand its engineering and support teams across Europe and the United States. These efforts build on earlier public demonstrations and ongoing collaborations with manufacturing partners, particularly in the automotive and industrial sectors.

For investors, the appeal goes beyond safety compliance. As factories become more automated, even small improvements in uptime and workflow continuity can translate into meaningful financial gains. Because Algorized’s system works with existing wireless infrastructure, manufacturers may be able to upgrade machine awareness without overhauling their entire hardware setup.

More broadly, the company is addressing a structural limitation in industrial automation. Robotics has advanced rapidly in precision and power, yet human-robot collaboration is still governed by rigid safety systems that prioritise stopping over adapting. By combining wireless sensing with edge-based AI models, Algorized is attempting to give machines a more continuous awareness of their surroundings from the start.