A new safety layer aims to help robots sense people in real time without slowing production

Updated March 17, 2026 1:02 AM

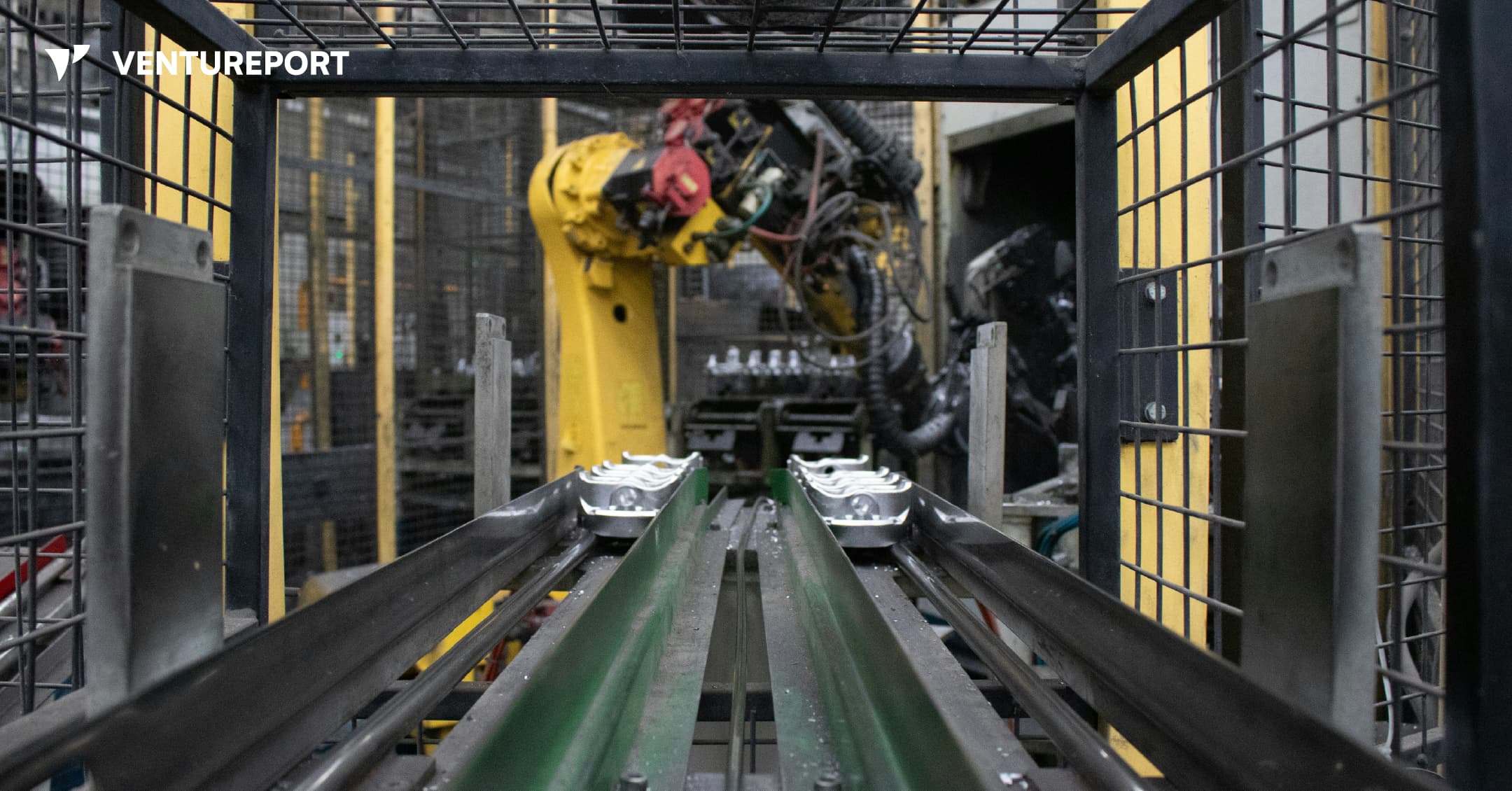

An industrial robot in a factory. PHOTO: UNSPLASH

Algorized has raised US$13 million in a Series A round to advance its AI-powered safety and sensing technology for factories and warehouses. The California- and Switzerland-based robotics startup says the funding will help expand a system designed to transform how robots interact with people. The round was led by Run Ventures, with participation from the Amazon Industrial Innovation Fund and Acrobator Ventures, alongside continued backing from existing investors.

At its core, Algorized is building what it calls an intelligence layer for “physical AI” — industrial robots and autonomous machines that function in real-world settings such as factories and warehouses. While generative AI has transformed software and digital workflows, bringing AI into physical environments presents a different challenge. In these settings, machines must not only complete tasks efficiently but also move safely around human workers.

This is where a clear gap exists. Today, most industrial robots rely on camera-based monitoring systems or predefined safety zones. For instance, when a worker steps into a marked area near a robotic arm, the system is programmed to slow down or stop the machine completely. This approach reduces the risk of accidents. However, it also means production lines can pause frequently, even when there is no immediate danger. In high-speed manufacturing environments, those repeated slowdowns can add up to significant productivity losses.

Algorized’s technology is designed to reduce that trade-off between safety and efficiency. Instead of relying solely on cameras, the company uses wireless signals, including Ultra-Wideband (UWB), mmWave, and Wi-Fi, to sense human presence. By analysing small changes in these radio signals, the system can pick up motion and breathing patterns in a space. This helps machines determine where people are and how they are moving, even in conditions where cameras may struggle, such as poor lighting, dust or visual obstruction.

Importantly, this data is processed locally at the facility itself — not sent to a remote cloud server for analysis. In practical terms, this means decisions are made on-site, within milliseconds. Reducing this delay, or latency, allows robots to adjust their movements immediately instead of defaulting to a full stop. The aim is to create machines that can respond smoothly and continuously, rather than reacting in a binary stop-or-go manner.
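To make the contrast concrete, here is a minimal Python sketch of the two behaviours described above: a zone-based rule that stops the machine whenever someone is inside a fixed radius, and a graded rule that scales speed with distance. It is an illustration only, not Algorized's method. The function names, the 0.5 m stop distance and the 2.5 m full-speed distance are all assumptions chosen for the example.

```python
# Illustrative sketch only. Names and thresholds are hypothetical,
# not taken from Algorized's Predictive Safety Engine.

def binary_speed(distance_m: float, zone_radius_m: float = 2.5) -> float:
    """Zone-based rule: full stop inside the zone, full speed outside."""
    return 0.0 if distance_m < zone_radius_m else 1.0


def continuous_speed(distance_m: float,
                     stop_m: float = 0.5,
                     full_m: float = 2.5) -> float:
    """Graded rule: scale speed linearly between a stop distance
    and a full-speed distance, returning a fraction from 0.0 to 1.0."""
    if distance_m <= stop_m:
        return 0.0
    if distance_m >= full_m:
        return 1.0
    return (distance_m - stop_m) / (full_m - stop_m)


if __name__ == "__main__":
    # Compare both rules for a person at several distances from the robot.
    for d in (0.4, 1.0, 1.5, 3.0):
        print(f"{d:.1f} m  binary={binary_speed(d):.2f}  "
              f"continuous={continuous_speed(d):.2f}")
```

With these assumed thresholds, a worker standing 1.5 m away halts the zone-based robot entirely, while the graded rule keeps it running at half speed. A real system would also have to account for how fast the person is approaching and for the robot's braking distance.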

With the new funding, Algorized plans to scale commercial deployments of its platform, known as the Predictive Safety Engine. The company will also invest in refining its intent-recognition models, which are designed to anticipate how humans are likely to move within a workspace. In parallel, it intends to expand its engineering and support teams across Europe and the United States. These efforts build on earlier public demonstrations and ongoing collaborations with manufacturing partners, particularly in the automotive and industrial sectors.

For investors, the appeal goes beyond safety compliance. As factories become more automated, even small improvements in uptime and workflow continuity can translate into meaningful financial gains. Because Algorized’s system works with existing wireless infrastructure, manufacturers may be able to upgrade machine awareness without overhauling their entire hardware setup.

More broadly, the company is addressing a structural limitation in industrial automation. Robotics has advanced rapidly in precision and power, yet human-robot collaboration is still governed by rigid safety systems that prioritise stopping over adapting. By combining wireless sensing with edge-based AI models, Algorized is attempting to give machines a more continuous awareness of their surroundings.

Keep Reading

Structured AI interviews and human judgment combine to address the global talent shortage

Updated April 1, 2026 8:56 AM

ManpowerGroup World Headquarters in Milwaukee. PHOTO: ADOBE STOCK

As hiring pressures mount across global markets, ManpowerGroup is turning to technology to strengthen how it connects people to work. The workforce solutions company has announced a global partnership with Hubert, a startup focused on AI-driven structured interviews. The aim is simple: make hiring faster and fairer, without removing the human touch.

ManpowerGroup has spent decades operating at the center of the global labor market. The company works with employers across industries to fill roles, manage workforce planning and build talent pipelines. With millions of placements each year, it has a clear view of how strained hiring has become. A large share of employers today report difficulty finding skilled talent. At the same time, candidates expect more transparency, quicker feedback and flexibility in how they engage with employers.

Hubert enters this picture as a specialist in structured digital interviewing. The startup has built tools that allow candidates to complete interviews online, at any time, while being assessed against consistent criteria. Instead of relying on informal screening calls or resume filters, its system focuses on standardized questions tied directly to job requirements. The idea is to bring more consistency to early-stage hiring.

The partnership brings these capabilities into ManpowerGroup’s global operations. AI-powered interviews will now support the first stage of screening, helping recruiters identify qualified candidates earlier in the process. This does not replace recruiters. Final decisions and contextual judgment remain with experienced hiring professionals. What changes is the speed and structure of the initial assessment.

For employers, this could mean earlier visibility into job-ready talent and less time spent on manual screening. For candidates, it offers more flexibility. A significant portion of interviews on Hubert’s platform are completed outside regular office hours, allowing applicants to engage when it suits them. That flexibility can make a difference in competitive labor markets where timing matters.

The collaboration is also positioned as a step toward reducing bias. By evaluating each candidate against the same transparent standards, the process becomes more consistent. While no system can remove bias entirely, structured assessments can reduce the variability that often comes with unstructured interviews.

At its core, the partnership addresses a gap many large organizations are facing. They need scale and speed, but they cannot afford to lose the human judgment that good hiring depends on. Manual processes are too slow. Fully automated systems can feel impersonal and risky. ManpowerGroup’s approach suggests a middle path, where technology handles repetition and structure and recruiters focus on potential and fit.

The move also reflects a broader shift in the workforce industry. AI is no longer being tested on the sidelines. It is being built into the foundation of hiring operations. For established players like ManpowerGroup, the challenge is not whether to adopt AI, but how to do so responsibly and at scale.

By working with Hubert, the company is signaling that the future of recruitment will likely blend structured digital tools with human expertise. In a market defined by talent shortages and rising expectations, that balance may prove critical.